David Clift

FirstEDA

Don’t worry; Aldec’s Riviera-PRO is not up before Judge Roy Snyder, but it is now runnable in a Docker container. I can already sense some of you thinking, what is Docker, and why would I want to use it?

At FirstEDA, we believe that having the correct methodology is critical to successful design and verification. That covers everything from using statement or functional coverage, through choosing the right verification methodology (be it OSVVM, VUnit, cocotb or UVM), to getting the best out of open-source tools like Jenkins, QEMU or Oracle VM VirtualBox. Over the years, we have conducted webinars and published conference papers, blog posts and technical articles on most of these. So, returning to the question: “What is Docker and why would I want to use it?”

Docker has emerged as a game-changer in the dynamic software development landscape, revolutionising how applications are built, shipped, and deployed. As with so many of the tools our software development colleagues use, we can benefit from it when verifying our RTL designs. Docker lets you quickly deploy consistent, scalable verification environments using containers, which have lower overhead and faster startup times than traditional VMs.

If you’re new to the world of containers and wondering what all the buzz is about, you’ve come to the right place. In this blog post we’ll unravel the mysteries of Docker and explore how it can simplify your verification workflow.

At its core, Docker is a platform for developing, shipping and running applications in ‘containers’. A container is a lightweight, standalone, and executable package that includes everything needed to run a piece of software, including the code, runtime, libraries, and system tools. Containers ensure your application runs consistently across different environments, whether a developer’s laptop, a testing server, or a production system.

Before we dive into how to use Docker, let’s understand some key concepts:

With the release of Riviera-PRO 2023.10, Aldec can provide a Dockerfile, which you can use after a small amount of configuration to build your own Riviera-PRO Docker image. Currently, you must email sales@aldec.com or file a support ticket via the Aldec Portal to gain access to the Dockerfile and associated files.

The first step is to install Docker on your machine. Installation instructions for various operating systems are on the official Docker website (https://docs.docker.com/get-docker/).
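Once Docker is installed, a quick check along these lines can confirm that the command-line client is available. This is a minimal sketch: the `docker_cli` variable is purely illustrative, and the `hello-world` smoke test is left commented out because it needs a running Docker daemon and network access.

```shell
# Confirm the Docker CLI is installed and on the PATH.
if command -v docker >/dev/null 2>&1; then
    # Printing the client version does not require a running daemon.
    docker --version
    # Optional smoke test: pulls Docker's small public test image and runs it once.
    # docker run --rm hello-world
    docker_cli="found"
else
    echo "docker not found on PATH; see the installation instructions" >&2
    docker_cli="missing"
fi
echo "Docker CLI: $docker_cli"
```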

Currently, I have only tested this on Linux.

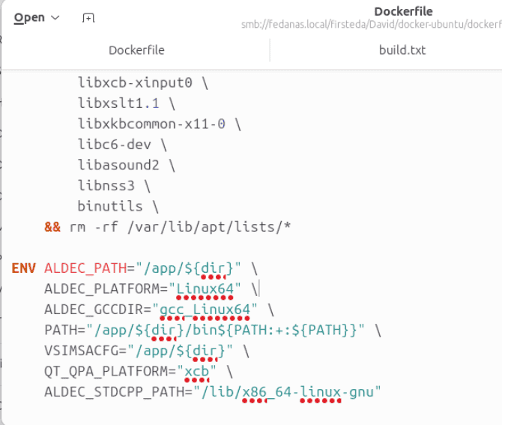

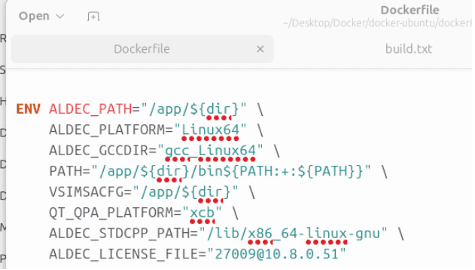

Aldec supplies two files for setting up Riviera-PRO to run in Docker: the Dockerfile itself and build.sh, which automates building the Docker image. We first need to add licensing details to the Dockerfile, so open it in your favourite text editor.

The Dockerfile contains the instructions to build the Docker image. It starts from the Ubuntu 23.04 base image with the FROM instruction, then adds the prerequisites for Riviera-PRO with the RUN instruction. Next come the required environment variables with the ENV instruction and, finally, the Riviera-PRO executables with the COPY instruction.
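If you would like an overview of that structure before editing, something like the following can list the relevant lines. It is only a sketch: `show_dockerfile_outline` is a hypothetical helper name, and it assumes the Dockerfile sits in the current directory.

```shell
# Print the FROM, RUN, ENV and COPY lines of a Dockerfile, with line numbers.
show_dockerfile_outline() {
    grep -nE '^[[:space:]]*(FROM|RUN|ENV|COPY)[[:space:]]' "$1"
}

# Assumes the Dockerfile is in the current directory.
if [ -f Dockerfile ]; then
    show_dockerfile_outline Dockerfile || echo "no FROM/RUN/ENV/COPY lines found"
fi
```

The line numbers make it easy to jump straight to the ENV section in your editor.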

Add the ALDEC_LICENSE_FILE environment variable to the ENV section as below:

Ensure the TCP port and IP Address are correct for your network license. Save the edited Dockerfile.
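As an illustration of the licensing line, the sketch below prints an ENV entry that could be pasted into the Dockerfile. The port `27009` and address `192.168.1.10` are placeholder values, not real settings, and the `port@host` form is an assumption about how your network license is addressed; check both against your own license server details.

```shell
# Placeholder values: substitute your own license server's TCP port and IP address.
license_port="27009"
license_host="192.168.1.10"

# Assumes the port@host form for a network license.
license_value="${license_port}@${license_host}"

# Print the line to paste into the ENV section of the Dockerfile.
env_line="ENV ALDEC_LICENSE_FILE=${license_value}"
echo "$env_line"
```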

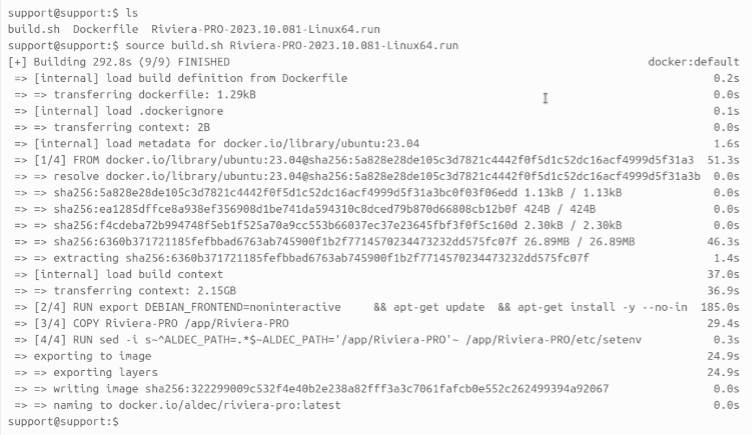

Copy the Riviera-PRO 2023.10 Linux installer to the same folder as the Dockerfile, then run the build script:

```shell
source build.sh Riviera-PRO-2023.10.081-Linux64.run
```

The script will execute and call docker build; this will take a few minutes.
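Because the build depends on the Dockerfile, build.sh and the installer all being in the same folder, it may be worth confirming that before starting a build that takes several minutes. A minimal sketch, where `check_build_inputs` is a hypothetical helper and the installer name is the one used in this post:

```shell
# Count which of the files needed for the build are absent from a folder.
check_build_inputs() {
    build_dir="$1"
    shift
    missing=0
    for f in "$@"; do
        if [ ! -f "$build_dir/$f" ]; then
            echo "missing: $build_dir/$f" >&2
            missing=$((missing + 1))
        fi
    done
    # The count of missing files is the function's output.
    echo "$missing"
}

installer="Riviera-PRO-2023.10.081-Linux64.run"   # installer name used in this post
missing_count=$(check_build_inputs . Dockerfile build.sh "$installer")
echo "files missing from the build folder: $missing_count"
```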

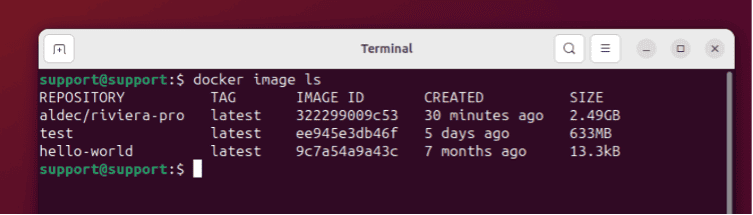

Run `docker images` to list your available Docker images; `aldec/riviera-pro` is the image that was just created.
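In a script, you may prefer a yes/no answer to scanning the image list by eye. One way to sketch that is with `docker image inspect`, which fails if the named image does not exist locally; the `image_state` variable is illustrative only.

```shell
image="aldec/riviera-pro"

# "docker image inspect" exits non-zero when the image is not present locally.
if command -v docker >/dev/null 2>&1 && docker image inspect "$image" >/dev/null 2>&1; then
    image_state="present"
else
    image_state="absent"
fi
echo "$image: $image_state"
```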

To use the image, we use the docker run command as below:

```shell
docker run --rm -v /$(pwd)://work -w //work aldec/riviera-pro sh -c 'vsim -c -do runme.do'
```

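Where:

- `--rm` removes the container automatically when the command exits.
- `-v` binds the current host folder to the work folder inside the container.
- `-w` makes that work folder the working directory inside the container.
- `aldec/riviera-pro` is the image to run.
- `sh -c` runs the quoted command inside the container, here vsim in command-line mode executing the runme.do macro.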

Because we bound a local host folder to the container containing our design and verification environment, once the verification run is finished, all the results are left on the local machine, and the container is removed automatically.
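To see this for yourself after a run, you could count the containers that still exist for the image. The sketch below assumes the image name used above and relies on the `ancestor` filter of `docker ps`; with `--rm` on the run command, the count would be expected to be zero once the run has finished.

```shell
image="aldec/riviera-pro"
leftover=0

if command -v docker >/dev/null 2>&1; then
    # -a includes stopped containers, -q prints one container ID per line.
    leftover=$(docker ps -aq --filter "ancestor=$image" 2>/dev/null | wc -l | tr -d ' ')
fi
echo "containers left from $image: $leftover"
```

The simulation results themselves can be inspected with ordinary tools in the host folder that was bound to the container.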

While Docker and virtual machines are both technologies used for virtualisation, they have different architectures and offer distinct advantages. Here are some benefits of Docker over traditional virtual machines:

In this brief guide, we’ve only scratched the surface of what Docker can do. As you continue your Docker journey, you’ll discover more advanced features, such as Docker Compose for managing multi-container applications, Docker Swarm for orchestration, and more.

Hopefully, you found this information valuable and helpful, and to paraphrase Judge Roy Snyder, “Engineers will be Engineers”.