David Clift

FirstEDA

Like many tools we use in the development and maintenance of our HDL code, lint tools (or ‘linters’) are another method we have co-opted from our software development brethren.

—

Please don’t get me started on the “Is HDL software or hardware?” subject! It’s a means to a hardware result, so it’s hardware!

Named after the Unix utility for checking C source code, linting has become the generic term for design verification tools that perform static analysis of source code. By applying a series of rules and guidelines that reflect good coding practice, these tools catch common errors that tend to lead to buggy code earlier in the design flow.

Linting is one of the most established static verification technologies; the concept was originally developed at Bell Labs in the 1970s to check C code. When a rule is violated, the lint tool flags the potential bug for the design engineer to review or waive.

In the hardware-design space, linting is typically applied to hardware description languages (HDLs) such as VHDL, Verilog and SystemVerilog prior to simulation. It is good engineering practice to ensure that HDL code is clean before entering the increasingly lengthy synthesis and timing-closure process. Lint tools are also increasingly used to catch coding styles that cause mismatches between RTL and post-synthesis simulation results.
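To make the RTL-versus-synthesis mismatch concrete, here is a classic case, offered as a minimal sketch (the module and signal names are hypothetical): a combinational `always` block whose sensitivity list omits one of its inputs. RTL simulation only re-evaluates `y_bad` when `a` changes, whereas synthesis tools generally ignore the sensitivity list and build a plain AND gate, so RTL and gate-level simulation disagree. Linters typically flag incomplete sensitivity lists; the Verilog-2001 `@*` form avoids the problem.

```verilog
// Hypothetical example of a classic RTL vs. post-synthesis mismatch.
module and_mismatch (
  input  wire a,
  input  wire b,
  output reg  y_bad,   // driven by a block whose sensitivity list omits b
  output reg  y_good   // driven by a block using @*, which covers a and b
);
  // RTL simulation only re-evaluates this block when a changes, so a change
  // on b alone leaves y_bad stale. Synthesis typically ignores the list and
  // builds an AND gate, so the gate-level netlist behaves like y_good.
  always @(a)
    y_bad = a & b;

  // Verilog-2001 @* infers the complete sensitivity list (a and b).
  always @*
    y_good = a & b;
endmodule
```

In simulation, toggling `b` while `a` is held at 1 updates `y_good` but leaves `y_bad` at its old value, which is exactly the kind of divergence from the synthesised netlist that a lint rule is there to catch.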

Because linters are static tools, their tests can be performed without the need for test vectors, which allows for very fast results. One criticism of such tools is that they can be overly noisy if not set up and configured correctly. It is also worth noting that the usefulness of a basic lint tool varies with the language you are using. For example, if you are using a strongly typed HDL such as VHDL, the compiler will catch many issues for you, but the same cannot be said for Verilog. Consider this Verilog model of a Johnson counter:

```verilog
`timescale 1ps / 1ps
// Verilog model of an 8-bit Johnson counter with
// asynchronous reset

module JOHNSON8 (CLK, RESET, Q);

input CLK;
input RESET;
output [7:0] Q;

reg [7:0] Q_I;
reg tmp;
integer I;

always @ (posedge CLK or posedge RESET)
begin
  if (RESET) // asynchronous reset
    for (I = 0; I <= 7; I = I + 1)
      Q_I[I] = 1'b1;
  else // active clock edge
  begin
    tmp = ~Q_I[7];
    for (I = 8; I >= 1; I = I - 1) // shifting bits
      Q_I[I] = Q_I[I-1];
    Q_I[0] = tmp; // inverted feedback
  end
end

assign Q = Q_I;

endmodule
```

The above code compiles without any errors or warnings but, as you can see by inspection, on its first iteration (I = 8) the bit-shifting loop writes to the nonexistent bit Q_I[8]. Verilog silently ignores writes to an out-of-range bit, so this will not be reported during simulation, and the issue may not become visible until there is a random failure in the field. Running the code through a linter revealed the following:

Warning: ../src/JOHNSON8.V : (23, 8): index 8 is out of range [7:0] for ‘Q_I’

This makes it clear that there is an issue in the code that requires fixing.
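The minimal fix is to start the shifting loop at 7 rather than 8. As an alternative sketch (not the author's code), the same counter can be written with a single concatenation and non-blocking assignments, which leaves no index that could fall outside [7:0]. It keeps the original's asynchronous, active-high reset and all-ones reset value; the ANSI-style port list is a stylistic choice.

```verilog
`timescale 1ps / 1ps

// One possible rewrite of the 8-bit Johnson counter: the shift is a single
// concatenation, so no bit index can fall outside [7:0].
module JOHNSON8 (
  input  wire       CLK,
  input  wire       RESET,  // asynchronous, active-high, as in the original
  output wire [7:0] Q
);
  reg [7:0] Q_I;

  always @(posedge CLK or posedge RESET)
    if (RESET)
      Q_I <= 8'hFF;                 // same all-ones reset value as the original
    else
      Q_I <= {Q_I[6:0], ~Q_I[7]};   // shift up, feed back the inverted MSB

  assign Q = Q_I;
endmodule
```

From reset the outputs step through FF, FE, FC, ... 80, 00, 01, 03, ... 7F and back to FF, the 16-state cycle expected of an 8-bit Johnson counter.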

Design Rule Checking (DRC) covers a superset of the tests performed by a linter. So, a DRC tool will not only check that syntactic and semantic errors are eliminated from your code, but will also analyse your code to see that it follows certain ‘good practices’, helping you to eliminate known issues. This points to a first difference between the two types of tool: tests in a DRC tool typically need some configuration before use, whereas with a linter you would typically only need to enable a specific rule.

Another feature of a DRC tool is its configurability, not just in what each test checks, but in which aspects of the design we check. This allows users to build comprehensive checks that form part of the design review process. A DRC tool does not replace design review, but augments it by automating some of its more dreary parts. For example, a DRC tool can check the consistent capitalisation of your data objects far more accurately and quickly than any engineer. Besides its speed of analysis, the other advantage of capturing your good engineering practices in a DRC tool is that it documents all the tests that pass and, more importantly, all those that fail.

So what type of test can a DRC tool perform? First, we have the simple code formatting type checks:

Those are just a few examples of simple checks; we also have more design-orientated checks. Design-orientated checks could enforce good engineering practices, or remove common mistakes that lead to mismatches between pre- and post-synthesis simulation results.

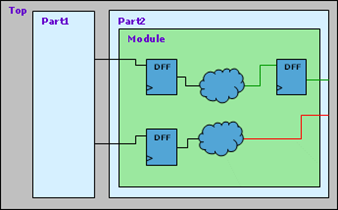

Figure 1 – Unregistered module outputs

For example, in Figure 1 we can see that the lower output is not registered. Whilst this will probably not cause any functional issues, achieving timing closure could be another matter: the combinational path does not end at this module's boundary, so its delay adds to the timing budget of whatever logic the output drives. It is accepted good design practice to register both the incoming and outgoing signals of a module to help with timing closure.
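As a sketch of the situation Figure 1 describes (the module name, port names, adder logic and synchronous reset are all assumptions, not taken from the figure), the module below drives one output straight from combinational logic and the other from a flip-flop. A DRC rule that requires registered outputs would flag `sum_comb`.

```verilog
// Hypothetical module: one unregistered and one registered output.
module out_regs (
  input  wire       clk,
  input  wire       rst,       // synchronous, active-high (assumption)
  input  wire [7:0] a,
  input  wire [7:0] b,
  output wire [7:0] sum_comb,  // unregistered: adder delay continues into the next module
  output reg  [7:0] sum_reg    // registered: the path ends at this module's flip-flop
);
  // Combinational output: changes as soon as a or b change.
  assign sum_comb = a + b;

  // Registered output: updates only on the rising clock edge.
  always @(posedge clk)
    if (rst)
      sum_reg <= 8'd0;
    else
      sum_reg <= a + b;
endmodule
```

Registering `sum_comb` as well would cost one clock cycle of latency, which is the usual price for a clean timing boundary at the module's outputs.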

So, we can see that whilst both HDL linting and DRC tools bring advantages to the design process, DRC, with its wider scope of analysis types, can be viewed as the superior technique. With DRC, however, it is important to ensure that the rules being checked are correctly configured and that the tool is deployed throughout the design process. Not only will a good DRC tool greatly aid in spotting issues early in the design phase but, often of equal importance, it will also document which rules were tested, which failed, and which, if any, were waived by the user.

As an HDL developer, which method should you use? Personally, I would want to use both lint and DRC. In fact, this is a sentiment that many of our Sigasi and Aldec customers share, particularly those operating in markets such as mil/aero and automotive, where safety and security are key and where stringent requirements make it necessary to check a design as thoroughly as possible.

Sigasi Studio not only gives me type-time syntactic and semantic checking, it also has a built-in linter. Any errors detected are highlighted in the code, as well as in the tool's problems panel. With their direct integration, Sigasi Studio and ALINT-PRO are a powerful combination. Using the two together helps ensure that lint and DRC errors that apply to single files are detected and removed during the code design phase, while for more complex tests that require the whole design hierarchy to be checked, Sigasi Studio builds the full ALINT-PRO project for you as you develop your code. Add a class-leading HDL simulation and verification platform such as Aldec’s Riviera-PRO into the mix as well, and you will have a very capable HDL development environment indeed.