Katarzyna Hrynkiewicz

ALDEC Software Quality Team Leader

Katarzyna Hrynkiewicz

ALDEC Software Quality Team Leader

When I enter the word “assertions” into a search engine I get lots of results, including articles, books, courses, and tools

—

Nothing unusual there, as assertions have been present in the EDA industry for many years. They considerably increase the power of design verification and should, quite rightly, be popular. But the hits I notice occur frequently have headers such as “Assertions do not need to be difficult”, “Easy to learn assertions”, and “Assertions for dummies”, suggesting that assertions are still seen as difficult, and that engineers need some help and encouragement to start using them. At Aldec, we have something which helps overcome any reservations engineers may have towards using assertions.

Usually, the first problem is with the translation of the specifications into assertion formulas. In assertion languages we have a choice of a wide array of different operators, but all of them are simple and refined. We select some operators and arrange them into a formula describing a scenario of events, as required by the specification. Having done this, we can compile our code – which is when things get tough. For example, if we run a simulation and there are no assertion errors, what does that really mean?

Is it possible that the design is free from errors, even though we have just started the verification? Unlikely. Maybe there are errors in the assertion formulas? Or maybe the testbench is weak and the situations modelled by the assertion formulas are not checked at all? Alternatively, what if the simulation results console is filled with failure messages in red? This is particularly alarming if the design outputs seem to be correct and the waveform looks OK at first glance. So what is wrong? What should we check next?

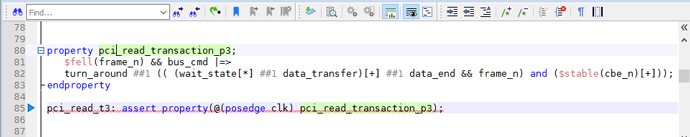

Let’s imagine the following assertion from a PCI-based design.

This assertion uses the possibilities of SystemVerilog Assertions language to model some freedom of behaviour in a transaction. It allows for many possible scenarios of events in one formula; as the [*] operator means that the event can be repeated zero, one or more times, even at every clock cycle until the end of the simulation. Similarly, the [+] operator allows freedom. So, the sequence creating the main part of the assertion can have many alternatives. This freedom is great from a coding perspective but can make the debugging of any suspicious behaviour extremely difficult.

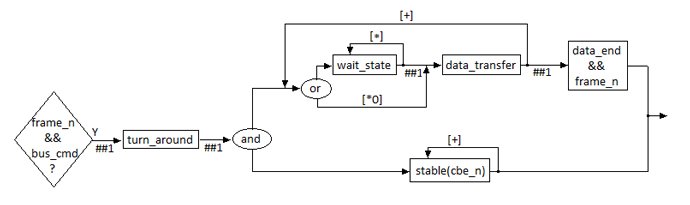

For fans of block diagrams, the assertion could be represented as follows:

Firstly, we should address the phase in which frame_n falls and a set of different signals (named here as bus_cmd) creates the start condition. Next should be a single cycle followed by the data transfer. The data can be transferred, or the wait state inserted. This behaviour can be repeated. The signal cbe_n should be stable during the whole data transfer.

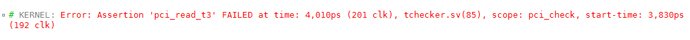

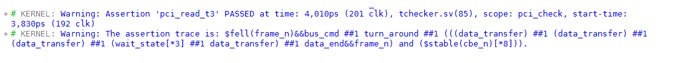

The trouble is that we get the error message:

We know something in the transfer is wrong, and we know when the failure occurred, and which assertion reported it. But what’s next?

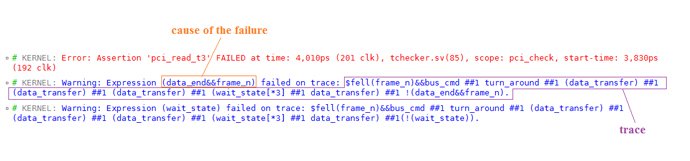

Cause and Trace reports can help us. They can be enabled during any simulation that uses assertions. Cause report points the direct reason of an assertion failure. In our example it is a Boolean expression which is false and results in an error. Trace report shows how the assertion evaluates in a process of time until the moment of failure. The way in which the assertion travelled is presented in a form of sequence.

In our example, the address phase with falling edge of frame_n && bus_cmd is done correctly. After this, the address phase is a single turn around cycle. Then, in the data phase, after three data transfers and three wait states there is another data transfer and then, in the next cycle, the correct end of transfer is missing. In the first Trace report, the expression (data_end && frame_n) terminating correct transfer is denoted with the “!” symbol, so it means it is false – i.e. it does not hold. The second Trace report suggests the problem with continuation of the data transfer, the wait state which could happen (as an alternative) instead of the data transfer is also missing.

With Cause and Trace reports enabled we know what happened step-by-step in each clock cycle when the assertion was checked. We don’t have to search for the expression values on the waveform. We know what is missing to finish the transfer correctly.

Of course, the Cause and Trace reports don’t tell you what line of code has caused this failure, but it gives you a good starting point to find the source of the issue. Using these debug reports cuts the time of analysing what is expected and what is simulated. We can find the bug faster, and we can quickly check what happens after fixing the bug. During the simulation, Pass results can be enabled and the Trace reports for passed assertions can be checked.

We can see cycle-by-cycle that the design behaves as required by the specification and finally gives a Pass result. We are sure that the testbench has enough stimulus to check this scenario. It is also easier to prepare more stimuli and check other alternative formulae; and the coverage of the testbench can increase.

Our example assertion was written in SVA but note that PSL fans can also use Cause and Trace reports for their assertions.

I sincerely recommend our Cause and Trace reports both for SVA and PSL for beginners and experienced users. Verification using Cause and Trace reports makes verification life much easier.

To enable the Cause and Trace report, refer to the assertion trace command in the Active-HDL or Riviera-PRO Product Help for more details.

Products:

Active-HDL

https://firsteda.com/products/aldec/active-hdl/

Riviera-PRO